Background

BEAST provides a bewildering number of models. Bayesians have two techniques to deal with this problem: model averaging and model selection. With model averaging, the MCMC algorithm jumps between different models, and models more appropriate for the data will be sampled more often than unsuitable models (see for example the substitution model averaging tutorial). Model selection on the other hand just picks one model and uses only that model during the MCMC run. This tutorial gives some guidelines on how to select the model that is most appropriate for your analysis.

Bayesian model selection is based on estimating the marginal likelihood: the term forming the denominator in Bayes’ formula. This is generally a computationally intensive task and there are several ways to estimate them. Here, we concentrate on nested sampling as a way to estimate the marginal likelihood as well as the uncertainty in that estimate.

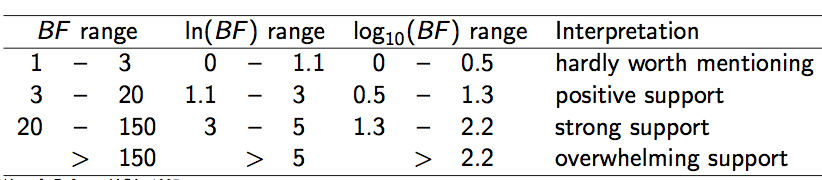

Say, we have two models, and , and estimates of their (log) marginal likelihoods, and . We can calculate the Bayes’ factor (BF), which is the fraction (or the difference in log space). Typically, if is greater than 1, is favoured, otherwise and are hardly distinguishable or is favoured. How much is favoured over can be found in the following table (Kass & Raftery, 1995):

Note that BFs are sometimes multiplied by a factor of 2, so beware of which definition was used when comparing BFs from different publications.

Nested sampling (NS) is an algorithm that works as follows:

- Randomly sample

Npoints (also called particles) from the prior distribution - While (marginal) likelihood estimates have not converged

- Pick the point with the lowest likelihood and save it to the log file

- Replace the point with a new one sampled from the prior via an MCMC chain of

subChainLengthsteps, under the condition that the new likelihood is at least

The main parameters of the NS algorithm are the number of particles (N) and the length of the MCMC chain to sample a replacement particle (subChainLength). To determine N, we first start the NS analysis with N=1. Based on the results of that analysis, we can determine a target standard deviation for marginal likelihood estimates and calculate the required N value using the estimated information measure H and a given formula (more details will follow later in the tutorial).

The subChainLength determines how independent the replacement point is from the point that was saved and is a parameter that needs to be determined by trial and error (see FAQ for details).

Programs used in this tutorial

BEAST2 - Bayesian Evolutionary Analysis Sampling Trees 2

BEAST2 is a free software package for Bayesian evolutionary analysis of molecular sequences using MCMC and strictly oriented toward inference using rooted, time-measured phylogenetic trees (Bouckaert et al., 2014), (Bouckaert et al., 2018). This tutorial uses the BEAST2 version 2.7.x.

BEAUti2 - Bayesian Evolutionary Analysis Utility

BEAUti2 is a graphical user interface tool for generating BEAST2 XML configuration files.

Both BEAST2 and BEAUti2 are Java programs, which means that the exact same code runs on all platforms. For us it simply means that the interface will be the same on all platforms. The screenshots used in this tutorial are taken on a Mac OS X computer; however, both programs will have the same layout and functionality on both Windows and Linux. BEAUti2 is provided as a part of the BEAST2 package so you do not need to install it separately.

Tracer

Tracer is used to summarise the posterior estimates of the various parameters sampled by the Markov Chain. This program can be used for visual inspection and to assess convergence. It helps to quickly view median estimates and 95% highest posterior density intervals of the parameters, and calculates the effective sample sizes (ESS) of parameters. It can also be used to investigate potential parameter correlations. We will be using Tracer v1.7.2.

Practical: Selecting a clock model

In this tutorial, we will analyse a dataset of hepatitis B virus (HBV) sequences sampled through time and concentrate on selecting a clock model. We will select between two popular clock models, the strict clock and the uncorrelated relaxed clock with log-normal-distributed rates (UCLN).

Setting up the strict clock analysis

First thing to do is set up the two analyses in BEAUti, and run them in order to make sure there are differences in the analyses. The alignment can be downloaded here. We will set up a model with tip dates, the HKY substitution model, the coalescent tree prior with constant population size, and a fixed clock rate.

In BEAUti:

- Start a new analysis using the

File > Template > Standardtemplate.- Import HBV.nex using

File > Import alignment.- In the

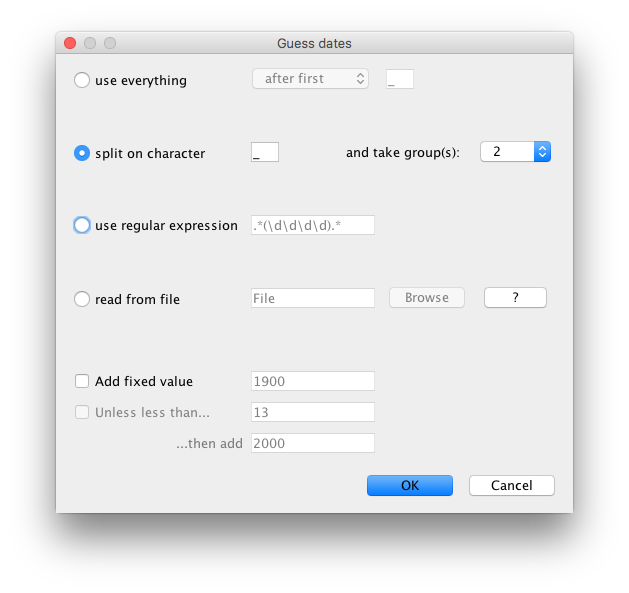

Tip Datespanel, selectUse tip dates, clickAuto-configure, selectsplit on characterand take group 2 (see Fig 2).- In the

Site Modelpanel, selectHKYas the substitution model (Subst Model) and leave the rest as is.- In the

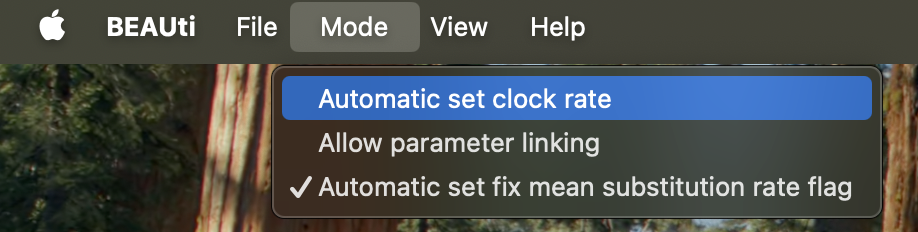

Clock Modelpanel, set theMean clock rateto2E-5. Usually we would want to estimate the clock rate, but to speed up the analysis for the tutorial we will fix the clock rate to2E-5as follows:

- Uncheck

Mode > Automatic set clock rate. Now theestimateentry should not be grayed out anymore (see Fig 3).- Uncheck the

estimatebox next to the clock rate entry.- In the

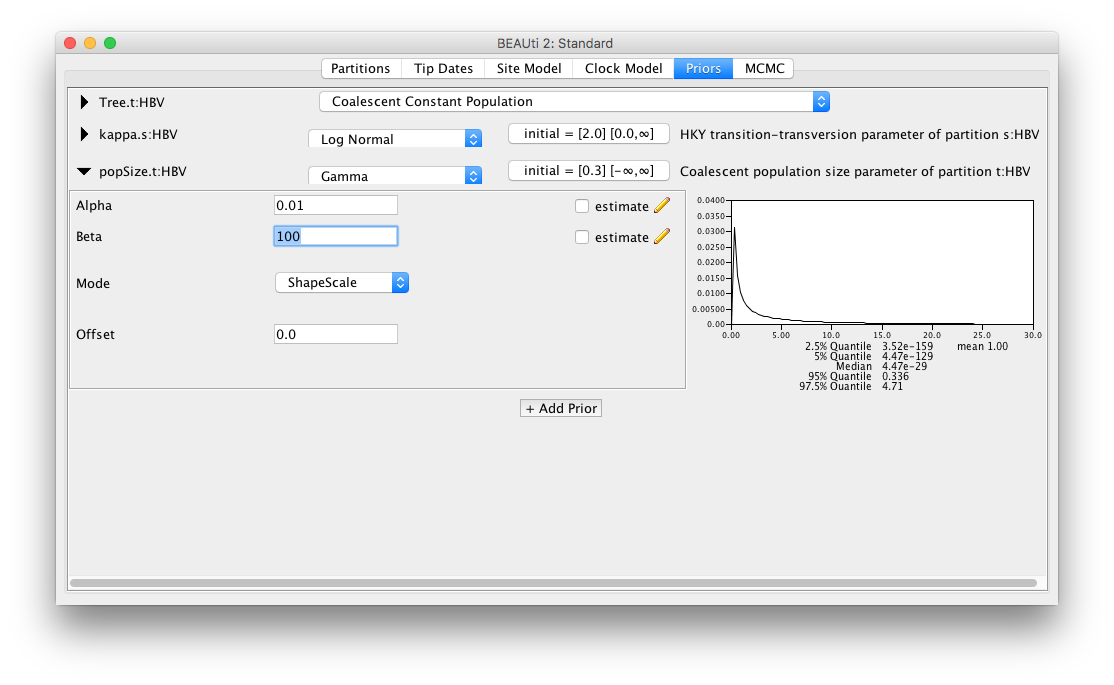

Priorspanel, selectCoalescent Constant Populationas the tree prior. Next, change the prior on the population size parameterpopSizeto aGammadistribution withalpha = 0.01andbeta = 100(Fig 4).- In the

MCMCpanel, change theChain Lengthto 1 million (1E6). You can also rename the output files fortracelogandtreelogto include “Strict” to distinguish them from the relaxed clock analysis.- Save the file as

HBVStrict.xml, and do not close BEAUti just yet!- Run the analysis with BEAST.

Note: Do you have a clock rate prior in the

Priorspanel? If so, the clock rate is being estimated, and you should revisit theClock Modelpanel where the clock is set up!

Setting up the relaxed clock analysis

While you are waiting for the strict clock analysis to finish, you can set up the relaxed clock analysis. This is now straightforward if BEAUti is still open (if BEAUti was closed, open BEAUti and load the file

HBVStrict.xmlbyFile > Load):

- In the

Clock Modelpanel, changeStrict clocktoRelaxed Clock Log Normal. Next, set theMean clock rateto2E-5, and uncheck theestimatebox.- In the

MCMCpanel, replaceStrictin the file names fortracelogandtreelogtoUCLN.- Save the file as

HBVUCLN.xml. Note: Do not useFile > Save; instead, useFile > Save as, otherwise the strict clock XML file will be overwritten.- Run the analysis in BEAST.

Once both analyses have completed, open the log files in Tracer and compare parameter estimates to see whether the two analyses differ substantially. You may also compare the trees in DensiTree.

- Are there any parameters that are very different?

- Do tree heights differ substantially?

- Which analysis is preferable and why?

If there are no substantial differences between the analyses for the question you are interested in, you do not have to commit to one model or another, and you can claim that the results are robust under different models.

However, if there are significant differences, you may want to perform a formal test to see which model is preferred over other models (in other words, which model is a better fit to the given data). In a Bayesian context, in practice this comes down to estimating marginal likelihoods, and calculating Bayes’ factors: the ratios of marginal likelihoods. Nested sampling (Russel et al., 2018) is one way to estimate marginal likelihoods.

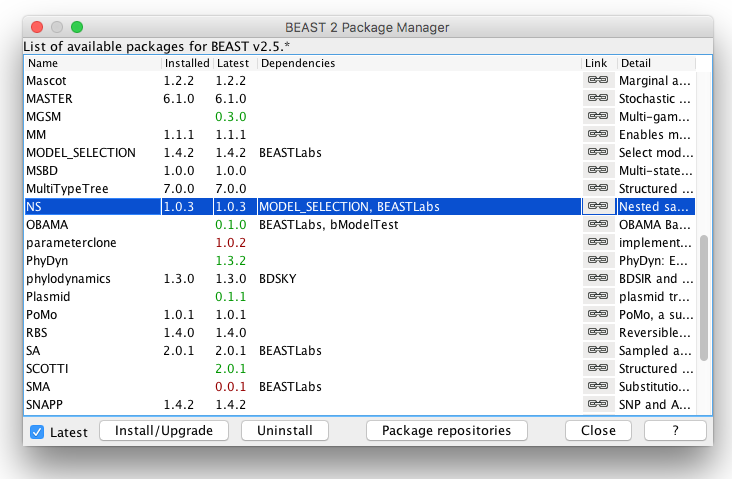

Installing the NS package

To use nested sampling, we first have to install the NS (version 1.2.2) package:

In BEAUti:

- Open the Package Manager by

File > Manage Packages.- Select NS and click

Install/Upgrade(Fig 5).- Restart BEAUti to load the NS package.

Setting up the nested sampling analyses

You can convert an MCMC analysis to an NS analysis using the MCMC2NS tool that comes with the NS package.

In BEAUti:

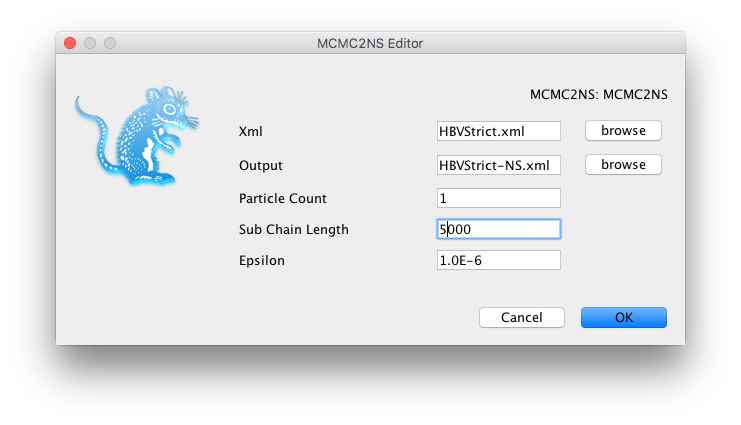

- Open the Package Application Launcher via the

File > Launch Appsmenu.- In the dialog that pops up, select the

MCMC to NS converter (NS)app and clickLaunch.- Select the XML file for the strict clock analysis (

HBVStrict.xml), set the output file asHBVStrict-NS.xmlin the same directory.- Change settings as shown in the Figure 6 below.

- Click

OK, andHBVStrict-NS.xmlshould be created.- Repeat the steps above for the relaxed clock analysis (

HBVUCLN.xml).

Alternatively, you can do the MCMC-to-NS conversion in a text editor as follows:

- Make a copy of the file

HBVStrict.xml/HBVUCLN.xmlasHBVStrict-NS.xml/HBVUCLN-NS.xml.- Start a text editor and in both copied

*NS.xmlfiles, change<run id="mcmc" spec="MCMC" chainLength="1000000">to

<run id="mcmc" spec="nestedsampling.gss.NS" chainLength="1000000" particleCount="1" subChainLength="5000">

- Here the

particleCountrepresents the number of active points (N) used in nested sampling: the more points used, the more accurate the marginal likelihood estimates, but the longer the analysis takes.- The

subChainLengthis the number of MCMC steps taken to get a new point that is independent (enough) from the point that was saved. Longer lengths mean longer runs, but also more independent samples. In practice, running with differentsubChainLengthis necessary to find out which length is most suitable (see FAQ).- Change the file names for the trace and tree log to include

NS(searching forfileName=will get you there fastest).- Save the files, and run with BEAST.

Once the NS analyses have completed, you should see something like this at the end of the BEAST output for the strict clock NS analysis:

Total calculation time: 14.983 seconds

End likelihood: -198.17149973585296

Producing posterior samples

Marginal likelihood: -12428.65341414792 sqrt(H/N)=(10.596753616593245)=?=SD=(10.802109255318992) Information: 112.29118721078201

Max ESS: 17.082043893737673

Processing 228 trees from file.

Log file written to HBVStrict-HBV-NS.posterior.trees

Done!

Marginal likelihood: -12428.505410641856 sqrt(H/N)=(10.58988064783551)=?=SD=(10.328583134047442) Information: 112.14557213540105

Max ESS: 17.443137102014155

Log file written to HBVStrict-NS.posterior.log

Done!

and for the relaxed clock NS analysis:

Total calculation time: 17.254 seconds

End likelihood: -198.22029538745582

Producing posterior samples

Marginal likelihood: -12413.866266847353 sqrt(H/N)=(10.966419757539098)=?=SD=(11.100842819562212) Information: 120.2623622985439

Max ESS: 13.251755407507508

Processing 246 trees from file.

Log file written to HBVUCLN-HBV-NS.posterior.trees

Done!

Marginal likelihood: -12413.74471470421 sqrt(H/N)=(10.959292419373286)=?=SD=(11.010465189987908) Information: 120.10609033333276

Max ESS: 13.62674380195755

Log file written to HBVUCLN-NS.posterior.log

Done!

Nested sampling produces estimates of marginal likelihoods (ML) as well as their standard deviations (SDs). At first sight, the relaxed clock has a (log) ML estimate of about -12414, while the strict clock is much worse at about -12429. However, the standard deviation of both analyses is about 11, making these estimates indistinguishable. Since this is a stochastic process, the exact numbers for your run will differ, but should not be that far apart (typically less than 2 SDs or about 22 log points in 95% of the time).

To get more accurate ML estimates, the number of particles can be increased. In nested sampling, the SD is approximated by , where is the number of particles and the measure of information. The latter is conveniently estimated and displayed in the NS analysis output (see above).

We can conveniently calculate the desired number of particles using the equation above. To aim for an SD of say 2, we need to run the NS analysis again with particles such that , hence should be enough. (Note that I used as estimated by the relaxed clock NS analysis instead of by the strict clock to have some “safety margin”.)

The computation time of nested sampling is linear with the number of particles, so it will take about 30 times longer to run if we change the particleCount from 1 to 30 in the XML:

- Make a copy of the file

HBVStrict-NS.xml/HBVUCLN-NS.xmlasHBVStric-NS30.xml/HBVUCLN-NS30.xml.- Start a text editor and in both copied

*NS30.xmlfiles, change<run id="mcmc" spec="nestedsampling.gss.NS" chainLength="1000000" particleCount="1" subChainLength="5000">to

<run id="mcmc" spec="nestedsampling.gss.NS" chainLength="1000000" particleCount="30" subChainLength="5000">

To save time, this tutorial provides you pre-cooked NS runs using 30 particles: HBVStrict-NS30.log and HBVUCLN-NS30.log.

Analyzing the nested sampling results

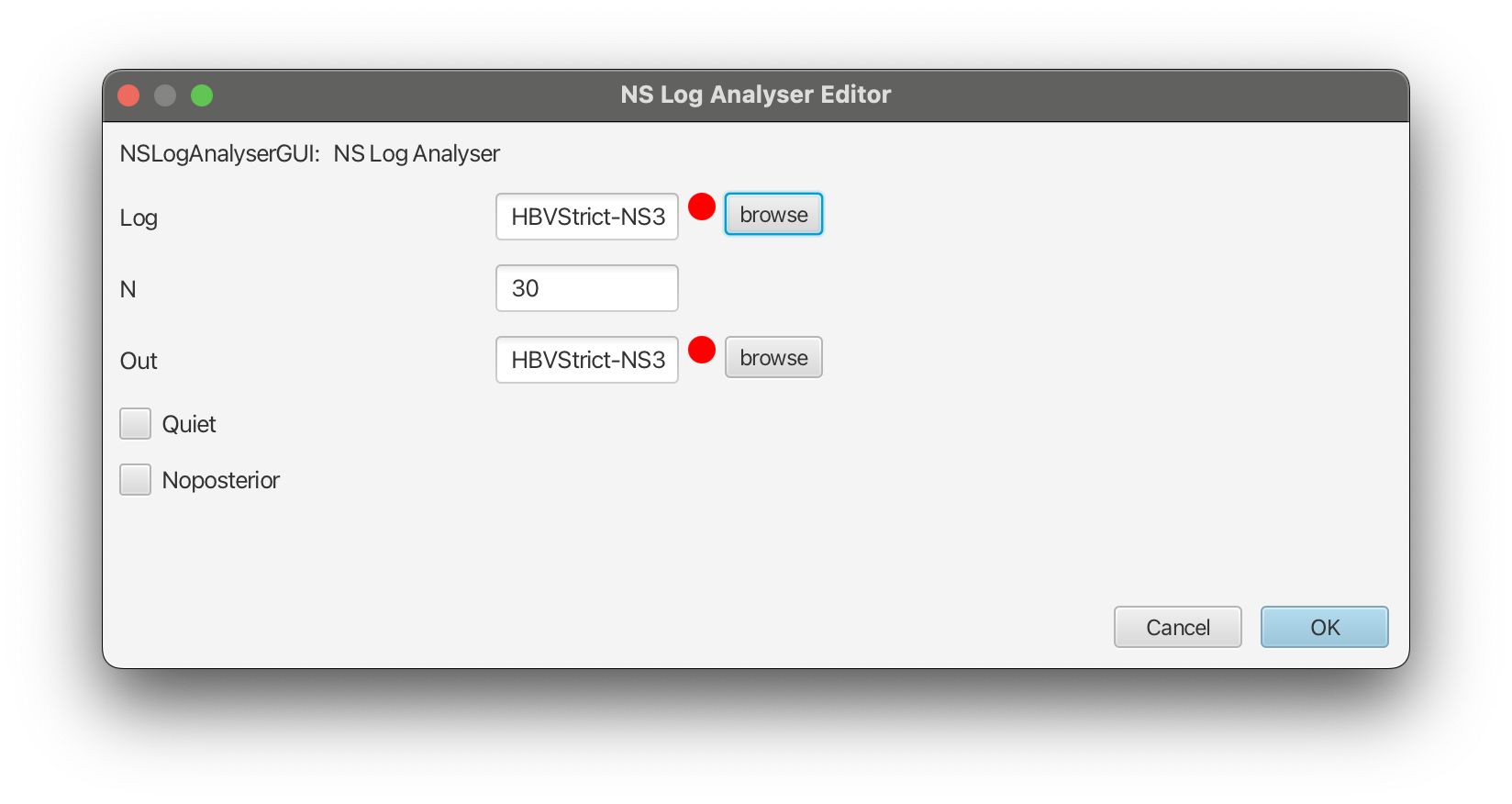

We will now download the pre-cooked log files HBVStrict-NS30.log and HBVUCLN-NS30.log and analyze them using the NSLogAnalyser tool that comes with the NS package:

In BEAUti:

- Open the Package Application Launcher via

File > Launch Apps.- In the dialog, select the

Nested Sampling Log Analyser (NS)app and clickLaunch.- Select the log file for the strict clock analysis (

HBVStrict-NS30.log), set the output file asHBVStrict-NS30.outin the same directory.- Change

N(number of particles) to30as shown in the Figure 7 below.- Click

OK, and after some time the output should be directly printed out in the pop-up window.- Repeat the steps above for the relaxed clock analysis (

HBVUCLN-NS30.log).

The output for the strict clock NS analysis should look like this:

Loading HBVStrict-NS30.log, burnin 0%, skipping 0 log lines

|---------|---------|---------|---------|---------|---------|---------|---------|

*********************************************************************************

Marginal likelihood: -12426.424659219112 sqrt(H/N)=(1.9487197469883364)=?=SD=(1.8689265005123525) Information: 113.92525956906857

Max ESS: 415.691078202295

Calculating statistics

|---------|---------|---------|---------|---------|---------|---------|---------|

********************************************************************************

#Particles = 30

item mean stddev

posterior -12509.4 3.380924

likelihood -12312.4 3.312481

prior -196.985 1.44903

treeLikelihood -12312.4 3.312481

Tree.height 3470.464 130.0832

Tree.treeLength 20696.58 559.9497

kappa 2.698771 0.1626

freqParameter.1 0.240524 0.006909

freqParameter.2 0.270687 0.00763

freqParameter.3 0.219939 0.006023

freqParameter.4 0.268849 0.007123

popSize 2325.738 298.5557

CoalescentConstant -163.330 2.961035

Done!

This gives us an ML estimate of -12426.4 with an SD of 1.95 for the strict clock model, slightly better than the SD of 2 we aimed for.

For the relaxed clock NS analysis, we get:

Marginal likelihood: -12417.941209427103 sqrt(H/N)=(2.037101812671995)=?=SD=(2.0736988078248095) Information: 124.49351385574582

This gives us an ML of -12417.9 with an SD of 2.04 for the uncorrelated log-normal relaxed clock model.

Therefore, the of the relaxed clock versus the strict clock is , which is more than twice the sum of the SDs (), which can be considered as reliable evidence in favour of the log-normal relaxed clock model over the strict clock. Note that judging from Figure 1, this amounts to overwhelming support for the relaxed clock.

Nested sampling FAQ

The analysis prints out multiple ML estimates with their SDs. Which one should I choose?

The difference between the estimates is due to the ways they are estimated from the nested sampling run. Since these are estimates that require random sampling, they differ from one estimate to another. When the standard deviation is small, the estimates will be very close, but when the standard deviations is quite large, the ML estimates can substantially differ. Regardless, any of the reported estimates are valid estimates, but make sure to report them with their standard deviation.

How do I know the sub-chain length is large enough?

NS works in theory if and only if the points generated at each iteration are independent. If you already did an MCMC run and know the effective sample size (ESS) for each parameter, to be sure every parameter in every sample is independent you can take the length of the MCMC run divided by the smallest ESS as the sub-chain length. This tends to result in quite large sub-chain lengths.

In practice, we can get away much shorter sub-chain lengths, which you can verify by running multiple NS analyses with increasing sub-chain lengths. If the ML and SD estimates do not substantially differ, shorter sub-chain length will be sufficient.

How many particles do I need?

To start, use only a few particles to get a sense of the information , which is estimated in the NS analysis. If you want to compare two models, the difference between their marginal likelihood estimates, and , needs to be at least to make sure the difference is not due to randomization.

If the difference is larger, you do not need more particles. If the difference is smaller, you can estimate how much the SD estimates must shrink to get a difference that is sufficiently large. Since , we have , where comes from the NS analysis with a few particles. Next, you can run the analysis again with the increased number of particles and see if the difference becomes large enough.

If the difference between marginal likelihood estimates is less than 2, the hypotheses may not be distinguishable – in terms of Bayes’ factors, the difference is barely worth mentioning.

Is NS faster than path sampling/stepping stone (PS/SS)?

This depends on many things, but in general, depends on how accurate the estimates should be. For NS, we get an estimate of the SD, which is not available for PS/SS. If the hypotheses have very large differences in MLs, NS requires very few (maybe just 1) particle, and will be very fast. If differences are smaller, more particles may be required, and the run-time of NS is linear in the number of particles.

The parallel implementation makes it possible to run many particles in parallel, giving a many-particle estimate in the same time as a single particle estimate (PS/SS can be parallelised by steps as well).

The output is written on screen, which I forgot to save. Can I estimate them directly from the log files?

The NS package has a NSLogAnalyser application that you can run via the menu File > Launch Apps in BEAUti – a window pops up where you select the NSLogAnalyser, and a dialog shows you various options to fill in. You can also run it from the command line on OS X or Linux using

/path/to/beast/bin/applauncher NSLogAnalyser -N 1 -log xyz.log

where the argument after N is the particleCount you specified in the XML, and xyz.log the trace log produced by the NS run.

Why are some NS runs longer than others?

Nested sampling stops automatically when the accuracy in the ML estimate cannot be improved upon. Because it is a stochastic process, some analyses get there faster than others, resulting in different run times.

Why are the ESSs so low when I open a log file in Tracer?

An NS analysis produces two trace log files: one for the nested sampling run (say myFile.log) and one with the posterior sample (myFile.posterior.log).

The ESSs in Tracer of log files with the posterior samples are meaningless, because the log file is ordered using the nested sampling run. If you look at the trace of the Likelihood, it should show a continuous increasing function. It is not quite clear how to estimate ESSs of a nested sampling run yet, though the number of entries in the posterior log is equal to the maximum theoretical ESS, which is almost surely an overestimate.

Useful Links

- Bayesian Evolutionary Analysis with BEAST 2 (Drummond & Bouckaert, 2014)

- BEAST 2 website and documentation: http://www.beast2.org/

- Nested sampling website and documentation: https://github.com/BEAST2-Dev/nested-sampling

- Join the BEAST user discussion: http://groups.google.com/group/beast-users

Relevant References

- Kass, R. E., & Raftery, A. E. (1995). Bayes factors. Journal of the American Statistical Association, 90(430), 773–795.

- Bouckaert, R., Heled, J., Kühnert, D., Vaughan, T., Wu, C.-H., Xie, D., Suchard, M. A., Rambaut, A., & Drummond, A. J. (2014). BEAST 2: a software platform for Bayesian evolutionary analysis. PLoS Computational Biology, 10(4), e1003537. https://doi.org/10.1371/journal.pcbi.1003537

- Bouckaert, R., Vaughan, T. G., Barido-Sottani, J., Duchene, S., Fourment, M., Gavryushkina, A., Heled, J., Jones, G., Kuhnert, D., De Maio, N., & others. (2018). BEAST 2.5: An Advanced Software Platform for Bayesian Evolutionary Analysis. BioRxiv, 474296.

- Russel, P. M., Brewer, B. J., Klaere, S., & Bouckaert, R. R. (2018). Model selection and parameter inference in phylogenetics using Nested Sampling. Systematic Biology, 68(2), 219–233.

- Drummond, A. J., & Bouckaert, R. R. (2014). Bayesian evolutionary analysis with BEAST 2. Cambridge University Press.

Citation

If you found Taming the BEAST helpful in designing your research, please cite the following paper:

Joëlle Barido-Sottani, Veronika Bošková, Louis du Plessis, Denise Kühnert, Carsten Magnus, Venelin Mitov, Nicola F. Müller, Jūlija Pečerska, David A. Rasmussen, Chi Zhang, Alexei J. Drummond, Tracy A. Heath, Oliver G. Pybus, Timothy G. Vaughan, Tanja Stadler (2018). Taming the BEAST – A community teaching material resource for BEAST 2. Systematic Biology, 67(1), 170–-174. doi: 10.1093/sysbio/syx060